The global legal landscape in 2026 is defined by a singular tension: the speed of Large Language Models (LLMs) versus the slow, deliberate nature of the rule of law. As solicitors and students adopt “Prompt Engineering”—the strategic drafting of inputs to elicit high-quality AI responses—the ethical boundaries are being redrawn. We are no longer asking if AI should be used in law, but rather how it can be used without compromising professional integrity or academic honesty.

Navigating these new tools requires a balance of innovation and academic integrity. Many students find that using structured prompts helps clarify complex case law, much like seeking professional Assignment Help from established platforms like myassignmenthelp to understand the foundational elements of a difficult module. While these tools can outline a skeletal argument or summarize a 50-page judgment in seconds, the ethical weight of the final output rests entirely on the human author. In the legal world, an AI “hallucination”—where the machine invents a non-existent case—is not just a mistake; it is a potential breach of the duty of candour to the court.

The Evolution of the ‘Digital Solicitor’

In 2026, the term “Prompt Engineer” is becoming a standard subset of the legal researcher’s role. It involves “Few-Shot Prompting” (giving the AI examples) and “Iterative Refinement” to drill down into specific statutes. However, the Bar Council and the Solicitors Regulation Authority (SRA) have made it clear: an AI is an assistant, not a practitioner. The “prompt” is essentially a request for a draft, and every word of that draft must be vetted by a human with a practising certificate.
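To make “Few-Shot Prompting” concrete, here is a minimal sketch of how a researcher might assemble such a prompt before sending it to any model. The `Example` class and `build_few_shot_prompt` function are hypothetical names invented for this illustration, and the prompt layout is one reasonable convention, not a required format. No AI service is called; the code only builds the text.

```python
from dataclasses import dataclass


@dataclass
class Example:
    """One worked example shown to the model (hypothetical structure)."""
    question: str
    answer: str


def build_few_shot_prompt(instruction: str, examples: list[Example], query: str) -> str:
    """Join the instruction, the worked examples, and the new query into one prompt."""
    parts = [instruction.strip(), ""]
    for i, ex in enumerate(examples, start=1):
        parts.append(f"Example {i}")
        parts.append(f"Q: {ex.question}")
        parts.append(f"A: {ex.answer}")
        parts.append("")
    parts.append("Now answer the following in the same format.")
    parts.append(f"Q: {query}")
    parts.append("A:")
    return "\n".join(parts)


# Illustrative content only; any answer the model returns still needs human vetting.
examples = [
    Example(
        question="What principle is Donoghue v Stevenson [1932] AC 562 known for?",
        answer="A manufacturer owes a duty of care to the ultimate consumer of its product.",
    ),
]

prompt = build_few_shot_prompt(
    instruction="You are assisting with legal research. Answer in one sentence and cite the case.",
    examples=examples,
    query="What principle is Carlill v Carbolic Smoke Ball Co [1893] 1 QB 256 known for?",
)
print(prompt)
```

“Iterative Refinement” then amounts to editing the instruction or swapping the examples and rebuilding the prompt until the draft is usable.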

Table 1: Permitted vs. Prohibited AI Uses in Legal Research (2026 Standards)

| Activity | Status | Ethical Requirement |
| --- | --- | --- |
| Summarizing Judgments | Allowed | Must be cross-referenced with the original PDF transcript. |
| Drafting Skeleton Arguments | Allowed | Must be heavily edited for “Human Voice” and specific case facts. |
| Primary Case Discovery | Restricted | AI cannot be the sole source of truth; must use a verified legal database. |
| Predicting Case Outcomes | Caution | Risk of bias; cannot be presented as a certainty to a client. |
| Inputting Client Data | Prohibited | Strictly forbidden on public models due to GDPR and privilege. |

The “Hallucination” Crisis and the Duty of Candour

The most significant ethical hurdle remains the “Hallucination” factor. AI models operate on probability, not truth. They predict the next likely word in a sentence, which occasionally leads to the creation of plausible-sounding but entirely fake legal precedents. Under the “Duty of Candour,” a legal professional must be honest with the court. If a student or solicitor submits AI-generated research without checking every single citation against a primary source, they are risking professional discipline, or worse, being struck off the roll.
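The citation check described above can be partly mechanised. The sketch below pulls UK neutral citations out of a draft and flags any that do not appear in a set the researcher has already confirmed against a primary source. The `verified` set is a stand-in for a real database lookup, the function names are hypothetical, and the pattern covers only a few common court codes. It narrows down what a human must check; it does not replace the check.

```python
import re

# Matches neutral citations such as "[2019] UKSC 41" or "[2020] EWCA Civ 100".
# Assumption: only a handful of court codes are covered; this is not exhaustive.
NEUTRAL_CITATION = re.compile(
    r"\[(\d{4})\]\s+(UKSC|UKHL|UKPC|EWCA\s+(?:Civ|Crim)|EWHC)\s+(\d+)"
)


def extract_citations(text: str) -> list[str]:
    """Return normalised neutral citations in order of first appearance."""
    found: list[str] = []
    for match in NEUTRAL_CITATION.finditer(text):
        court = " ".join(match.group(2).split())  # collapse irregular spacing
        citation = f"[{match.group(1)}] {court} {match.group(3)}"
        if citation not in found:
            found.append(citation)
    return found


def unverified_citations(text: str, verified: set[str]) -> list[str]:
    """List citations in the text that are absent from the human-verified set."""
    return [c for c in extract_citations(text) if c not in verified]


# Citations the researcher has personally confirmed in a primary source.
verified = {"[2019] UKSC 41"}

# The second citation is deliberately invented for this demo to mimic a hallucination.
draft = (
    "See R (Miller) v The Prime Minister [2019] UKSC 41, "
    "followed in Smith v Jones [2023] UKSC 999."
)

print(unverified_citations(draft, verified))
```

Anything the function returns should be treated as unconfirmed until someone has opened the judgment itself. An empty result only means the citations exist, not that they support the proposition they are cited for.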

When tackling high-pressure modules, obtaining specialized Law Assignment Help ensures that the research remains grounded in verified legal precedents rather than AI-generated guesswork.

The 5-Step Ethical Framework for Legal Prompting

To keep AI-assisted work reliable in 2026, legal researchers should follow a structured hierarchy of verification, working from the base of the pyramid up to the final human sign-off.

Diagram: The “Human-in-the-Loop” Verification Pyramid

- Level 1 (Top): Final Human Sign-off (Validation of logic and ethics)

- Level 2: Source Verification (Checking AI citations against Westlaw/LexisNexis)

- Level 3: Contextual Editing (Applying specific jurisdiction rules like UK vs. US law)

- Level 4: Iterative Prompting (Refining the AI output for accuracy)

- Level 5 (Base): Initial AI Draft (The raw prompt engineering output)
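One way to picture the pyramid is as a checklist that refuses to let anyone skip a level. The sketch below is a hypothetical illustration, not an official workflow: the `Stage` and `ReviewRecord` names are invented here, and the numbering simply mirrors the five levels listed above, climbed from the base (Level 5) to the top (Level 1).

```python
from enum import IntEnum
from typing import Optional


class Stage(IntEnum):
    """The five pyramid levels; the numbers match the list above."""
    INITIAL_AI_DRAFT = 5
    ITERATIVE_PROMPTING = 4
    CONTEXTUAL_EDITING = 3
    SOURCE_VERIFICATION = 2
    FINAL_HUMAN_SIGN_OFF = 1


class ReviewRecord:
    """Tracks which levels a document has cleared, enforcing base-to-top order."""

    def __init__(self) -> None:
        self.completed: list[Stage] = []

    def next_stage(self) -> Optional[Stage]:
        """Return the lowest level not yet completed, or None when all are done."""
        remaining = sorted(set(Stage) - set(self.completed), reverse=True)
        return remaining[0] if remaining else None

    def complete(self, stage: Stage) -> None:
        """Mark a level as done; raise ValueError if an earlier level was skipped."""
        expected = self.next_stage()
        if stage is not expected:
            raise ValueError(f"Cannot complete {stage.name}; next required stage is "
                             f"{expected.name if expected else 'none'}")
        self.completed.append(stage)

    def is_signed_off(self) -> bool:
        """True only once the final human sign-off has been recorded."""
        return Stage.FINAL_HUMAN_SIGN_OFF in self.completed


record = ReviewRecord()
for stage in sorted(Stage, reverse=True):  # Level 5 first, Level 1 last
    record.complete(stage)
print(record.is_signed_off())
```

The design choice is that sign-off cannot be recorded until source verification has been, which is the point of keeping a human in the loop.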

Data Privacy: The Silent Ethics Violation

Lawyers owe their clients a strict duty of confidentiality. A major ethical trap in prompt engineering is entering sensitive case facts into a public AI model. Many public AI services may use your prompts to train their future versions. This means that if you paste in a client’s private contract to ask for a summary, you might be leaking privileged information into the public domain.

In 2026, the standard is the use of “Local LLMs” or “Enterprise Tiers” where data is encrypted and not used for training. For law students, this means never uploading an unpublished thesis or a professor’s proprietary lecture notes into a public AI model.
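Where a public model must be used at all, one partial safeguard is to strip obvious identifiers from the text first. The sketch below is a hypothetical illustration under loud assumptions: the `redact` function and its placeholder labels are invented here, the patterns catch only email addresses and UK-style postcodes plus names the user supplies, and street addresses and most other details pass straight through. It is not a substitute for a local or enterprise deployment, and redacted text can still be privileged.

```python
import re

# Assumption: these two patterns are simple approximations, not complete validators.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "UK_POSTCODE": re.compile(r"\b[A-Z]{1,2}\d[A-Z\d]?\s?\d[A-Z]{2}\b"),
}


def redact(text: str, party_names: list[str]) -> str:
    """Replace supplied party names, then emails and postcodes, with placeholders."""
    for i, name in enumerate(party_names, start=1):
        text = re.sub(re.escape(name), f"[PARTY_{i}]", text, flags=re.IGNORECASE)
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


# Entirely fictional case facts for demonstration.
facts = (
    "Jane Doe (jane.doe@example.com) of 10 Example Road, "
    "London SW1A 1AA disputes the invoice."
)

print(redact(facts, party_names=["Jane Doe"]))
```

Note that the street address survives in the output, which shows why pattern-based redaction alone cannot satisfy the confidentiality duty described above.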

Transparency: When to Declare AI Assistance?

The ethics of 2026 demand transparency. Some High Court judges now require a formal disclosure statement if AI was used to draft any part of a submission. In the academic world, the rules are even stricter.

- The “Brainstorming” Exception: Using AI to generate a list of potential dissertation topics is generally seen as acceptable.

- The “Drafting” Violation: Submitting an AI-generated essay as your own work is considered academic malpractice.

The key is to use AI for process, not product.

Cognitive Fitness: The Physical Side of Law

There is an emerging trend in 2026 called “Cognitive Fitness.” Fact-checking AI output can demand more mental stamina than writing from scratch. Law students are discovering that a sedentary lifestyle contributes to mental fatigue, and fatigue is exactly when AI errors slip through the cracks.

Integrating physical health routines—much like the fitness philosophies discussed on sites like Befitnatics—has become essential for modern law students. A healthy body supports the sharp, critical eye needed to catch an AI hallucination before it reaches a professor’s desk or a judge’s bench.

The Socio-Economic Ethics of AI

One often overlooked ethical issue is the “Digital Divide.” Prompt engineering is a skill that requires access to high-end, often expensive, AI models. There is a risk that wealthier students or “Magic Circle” law firms will have an unfair advantage over those using free, outdated models. To combat this, universities are beginning to provide “Ethics-Approved” AI tools to all students to ensure a level playing field.

Table 2: Comparing LLM Capabilities for Legal Contexts

| Model Type | Best Use Case | Primary Ethical Risk |
| --- | --- | --- |
| Public LLM (GPT-Free) | General brainstorming | Data leakage & High Hallucination rates. |
| Legal-Specific AI | Case law discovery | Over-reliance on “closed” logic. |
| Enterprise Tiers | Drafting & Summarization | High cost/Accessibility issues. |
| Local/Offline AI | Highly sensitive data | High technical hardware requirements. |

The Future: A Partnership, Not a Replacement

Will AI replace the junior solicitor? The consensus in 2026 is a resounding “No.” Instead, the market is shifting toward the “Centaur Lawyer”—a professional who is half-human and half-AI in their workflow. The ethics of prompt engineering suggest that the prompt should be a tool for discovery, not a replacement for original thought.

The law is a living, breathing entity shaped by human values, empathy, and social justice—concepts that an algorithm can simulate but never truly “feel.” As we move forward, the most successful law students will be those who master the machine without losing their human judgment.

Conclusion: Mastering the Prompt

The ethics of prompt engineering boil down to one word: Accountability. Whether you are a student using AI to structure a complex argument or a partner at a firm summarizing a merger, you are the “Editor-in-Chief” of that content. By utilizing AI as a research assistant while maintaining the rigorous standards of Law Assignment Help and expert peer review, the legal community can embrace technology without losing its soul.

The goal isn’t to work harder; it’s to work smarter while staying within the lines of professional conduct. In 2026, the best lawyers aren’t the ones with the fastest prompts—they are the ones with the best judgment.

Frequently Asked Questions

Is using AI for legal research considered professional misconduct?

Using AI is generally permitted as a preliminary research tool. However, it becomes misconduct if a practitioner fails to verify the output, leading to the submission of fabricated citations or inaccurate legal arguments to a court.

How does “prompt engineering” differ from traditional legal searching?

Traditional searching relies on specific keywords and Boolean operators to find documents. Prompt engineering uses natural language instructions to guide an AI through logical reasoning, summarization, and drafting tasks.

What are the primary privacy risks of AI in the legal sector?

The main risk involves inputting sensitive case facts or privileged client information into public AI models. These inputs may be used to train future versions of the software, potentially leaking confidential data into the public domain.

Can law students be penalized for using AI in their dissertations?

Most academic institutions permit AI for brainstorming or structural assistance. However, using it to generate the final text of a thesis without disclosure is typically classified as academic malpractice or plagiarism.

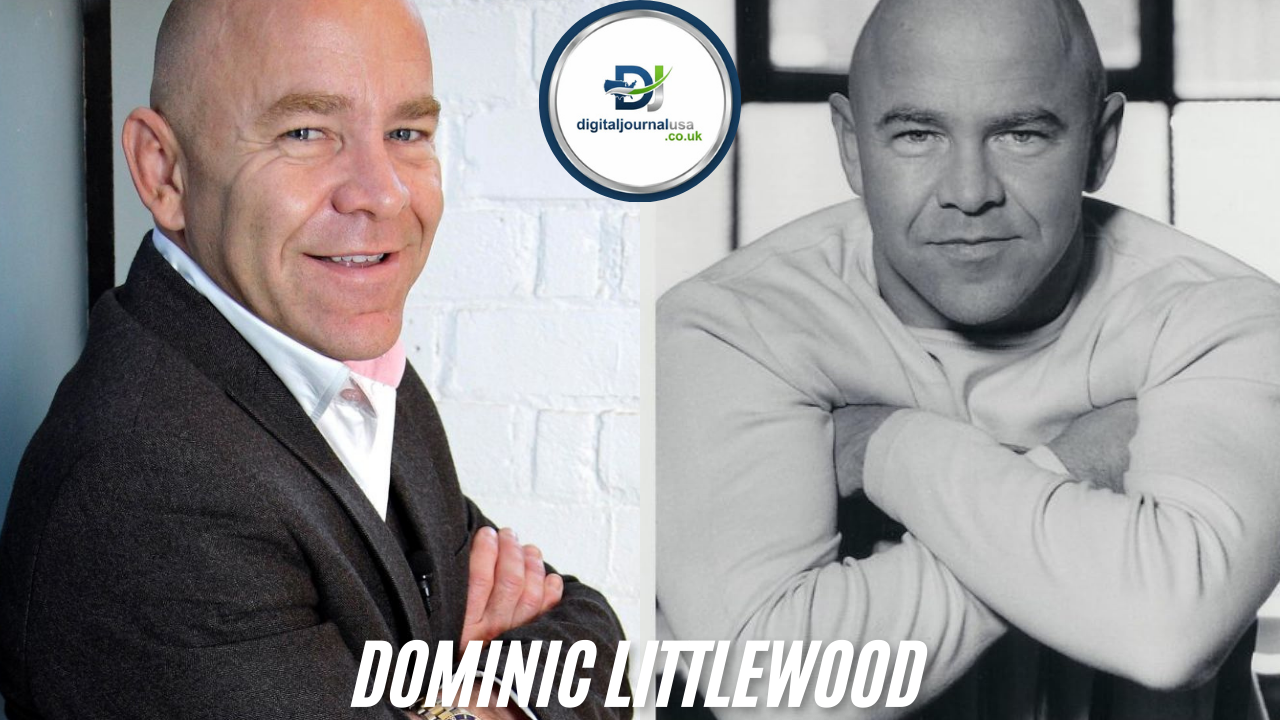

About The Author

Ella Thompson is a dedicated content strategist and academic researcher at MyAssignmentHelp. With a focus on the intersection of emerging technology and higher education, Ella specializes in navigating the ethical complexities of AI integration within the legal and academic sectors to help students achieve long-term success.